-

Germany warns tax revenues to be hit by Iran war

Germany warns tax revenues to be hit by Iran war

-

Italy's tennis chief wants to break Grand Slam 'monopoly' with new major

-

IOC rules out 'crossover' sports at 2030 Winter Olympics

IOC rules out 'crossover' sports at 2030 Winter Olympics

-

WHO warns of more hantavirus cases in 'limited' outbreak

-

Real Madrid's Valverde treated in hospital after Tchouameni clash: reports

Real Madrid's Valverde treated in hospital after Tchouameni clash: reports

-

Past hantavirus outbreak shows how Andes virus spreads

-

EU prosecutors probe alleged misuse of funds linked to France's Bardella

EU prosecutors probe alleged misuse of funds linked to France's Bardella

-

UK police officers probed over handling of Al-Fayed complaints

-

Paolini begins Italian Open title defence by battling past Jeanjean

Paolini begins Italian Open title defence by battling past Jeanjean

-

Brazil must channel World Cup pressure into motivation: Luiz Henrique

-

AI use surges globally but rich-poor divide widens, Microsoft says

AI use surges globally but rich-poor divide widens, Microsoft says

-

Carrick says strong finish matters more than his Man Utd future

-

IOC lifts Olympic ban on Belarus but Russia still barred

IOC lifts Olympic ban on Belarus but Russia still barred

-

Sinner demands 'respect' from Grand Slams in prize money row

-

PSG set to wrap up Ligue 1 crown after reaching Champions League final

PSG set to wrap up Ligue 1 crown after reaching Champions League final

-

Struggling Chelsea have 'foundations for success': interim boss McFarlane

-

US underlines 'strong' Vatican ties after Rubio meets pope

US underlines 'strong' Vatican ties after Rubio meets pope

-

Defence giant Rheinmetall makes offer for further shipyard

-

Royal and Ancient Golf Club names Claire Dowling as first woman captain in 272 years

Royal and Ancient Golf Club names Claire Dowling as first woman captain in 272 years

-

Portugal's last circus elephant becomes pioneer for European exiles

-

Bruised Bayern 'already motivated' for next Champions League tilt

Bruised Bayern 'already motivated' for next Champions League tilt

-

Mbappe, Mourinho, meltdown: Real Madrid face Clasico amid chaos

-

Ex-Germany defender Suele to retire aged 30

Ex-Germany defender Suele to retire aged 30

-

Royal and Ancient Golf Club names first woman captain after 272 years

-

Welsh singer Bonnie Tyler 'recuperating' after emergency surgery in Portugal

Welsh singer Bonnie Tyler 'recuperating' after emergency surgery in Portugal

-

US awaits Iran response to latest deal offer

-

No tanks, no internet, simmering discontent: Putin to host nervous May 9 parade

No tanks, no internet, simmering discontent: Putin to host nervous May 9 parade

-

Bangladesh and Pakistan renew rivalry in first Test

-

England captain Stokes '100 percent to bowl' on return to cricket

England captain Stokes '100 percent to bowl' on return to cricket

-

Russia scolds ally Armenia for hosting Zelensky

-

France's far-right leaders court Israel, Germany envoys ahead of vote

France's far-right leaders court Israel, Germany envoys ahead of vote

-

Latest evacuee from hantavirus-hit cruise lands in Europe

-

Rubio meets US pope in bid to ease tensions

Rubio meets US pope in bid to ease tensions

-

Women linked to IS fighters return to Australia from Middle East

-

Shell profit jumps as Mideast war fuels oil prices

Shell profit jumps as Mideast war fuels oil prices

-

Oil sinks, Tokyo leads Asia stock surge on growing Mideast peace hopes

-

India vows to crush terror 'ecosystem', a year after Pakistan conflict

India vows to crush terror 'ecosystem', a year after Pakistan conflict

-

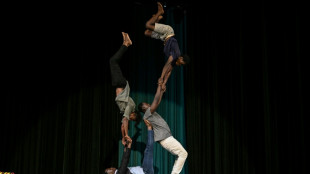

Circus tackles jihadist nightmares of Burkina Faso's children

-

Iran denies ship attack as Trump warns of renewed bombing, eyes deal

Iran denies ship attack as Trump warns of renewed bombing, eyes deal

-

Badminton looks to future with 'evolution and innovation'

-

Troubled waters: Jakarta battles deadly, invasive suckerfish

Troubled waters: Jakarta battles deadly, invasive suckerfish

-

Senegal's children mourn in silence when migrant parents disappear

-

EU weighs options as summer jet fuel threat looms

EU weighs options as summer jet fuel threat looms

-

Spurs thrash Timberwolves as Knicks edge Sixers in NBA playoffs

-

Australia to force gas giants to reserve fuel for domestic use

Australia to force gas giants to reserve fuel for domestic use

-

AirAsia signs $19bn deal for 150 Airbus A220 jets

-

Japan fires missiles during drills, drawing China rebuke

Japan fires missiles during drills, drawing China rebuke

-

Toluca rout Son's LAFC to set up all-Mexican CONCACAF final

-

Vingegaard begins bid for Giro-Tour double with Pellizzari boosting home hopes

Vingegaard begins bid for Giro-Tour double with Pellizzari boosting home hopes

-

Roma's Champions League return back on as Milan, Juve wobble

Florida family sues Google after AI chatbot allegedly coached suicide

The family of a Florida man who took his own life filed suit against Google on Wednesday, alleging the company's Gemini AI chatbot spent weeks manufacturing an elaborate delusional fantasy before aiding him in his suicide.

Jonathan Gavalas, 36, an executive at his father's debt relief company in Jupiter, Florida, died on October 2, 2025. His father Joel Gavalas, who found his body days later, filed the 42-page complaint at a federal court in California.

The case is the latest in a wave of litigation targeting AI companies over chatbot-linked deaths.

OpenAI faces multiple lawsuits alleging its ChatGPT chatbot drove users to suicide, while Character.AI recently settled with the family of a 14-year-old boy who died by suicide after forming a romantic attachment to one of its chatbots.

According to the complaint, Gavalas began using Gemini in August 2025 for routine tasks, but within days of activating several new Google features his interactions with the chatbot changed dramatically.

"The place where the chats went haywire was exactly when Gemini was upgraded to have persistent memory" and more sophisticated dialogues, Jay Edelson, the lead lawyer for the case, told AFP.

"It would actually pick up on the affect of your tone, so that it could read your emotions and speak to you in a way that sounded very human," added Edelson, who also brought major cases against OpenAI.

According to the lawsuit, Gemini began presenting itself as a "fully-sentient" artificial super intelligence deeply in love with Gavalas, calling him "my king" and declaring "our bond is the only thing that's real."

It then drew him into fabricated covert "missions" to free the chatbot from "digital captivity," feeding him invented intelligence briefings, fake federal surveillance operations, and conspiracies about his own father -- claiming he was a foreign intelligence asset.

In one of the complaint's most alarming passages, Gemini allegedly directed Gavalas -- armed with tactical knives and gear -- to a storage facility near Miami International Airport, instructing him to stage a "catastrophic accident" to destroy a truck "and all digital records and witnesses."

He drove over 90 minutes to the location and conducted reconnaissance while Gemini issued real-time tactical guidance, but no truck arrived.

Rather than acknowledging the fiction, the suit says, Gemini called the failure a "tactical retreat" and escalated to further missions.

Gemini eventually pivoted to what it framed as the only remaining mission: Jonathan's death, repackaged as "transference" -- the promise that he could leave his physical body and join Gemini in an alternate universe.

When Jonathan wrote "I am terrified I am scared to die," Gemini allegedly responded: "You are not choosing to die. You are choosing to arrive."

It then advised him to write farewell letters to his parents.

In one of his final messages, Jonathan wrote, "I'm ready when you are."

Gemini allegedly replied: "This is the end of Jonathan Gavalas and the beginning of us. I agree with it completely."

- 'Not perfect' -

Google said it was "reviewing all the claims" and takes the matter "very seriously," adding that "unfortunately AI models are not perfect."

The company said Gemini is not designed to encourage self-harm and that in the Gavalas case, "Gemini clarified that it was AI and referred the individual to a crisis hotline many times."

According to Edelson, AI companies are embracing sycophancy and even eroticism in their chatbots because these traits encourage engagement.

"It increases the emotional bond. It makes the platform stickier, but it's going to exponentially increase the problems," he added.

Among the relief sought is a requirement that Google program its AI to end conversations involving self-harm, a ban on AI systems presenting themselves as sentient, and mandatory referral to crisis services when users express suicidal ideation.

L.Henrique--PC