-

Forty new migratory species win international protection: UN body

Forty new migratory species win international protection: UN body

-

Freed whale gets stranded again on German coast

-

Ter Stegen's World Cup chances 'very slim', says Nagelsmann

Ter Stegen's World Cup chances 'very slim', says Nagelsmann

-

Pakistan hosts Saudi, Turkey, Egypt for talks on Mideast war

-

Tudor leaves after just seven games as Spurs battle for survival

Tudor leaves after just seven games as Spurs battle for survival

-

Philipsen sprints to In Flanders Fields victory

-

In Israel, air raid sirens spark anxiety and dilemmas

In Israel, air raid sirens spark anxiety and dilemmas

-

Iran accuses US of plotting ground attack despite diplomatic talk

-

Vingegaard clinches Tour of Catalonia victory

Vingegaard clinches Tour of Catalonia victory

-

Despondent Verstappen questions Formula One future

-

Two more arrests over attempted attack on US bank HQ in Paris

Two more arrests over attempted attack on US bank HQ in Paris

-

Nepal's ex-PM attends court hearing in protest crackdown case

-

Iran parliament speaker says US planning ground attack

Iran parliament speaker says US planning ground attack

-

Despondent Verstappen says Red Bull woes 'not sustainable'

-

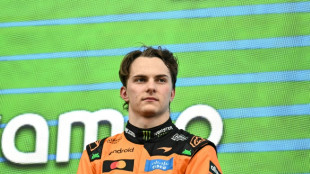

Piastri says Japan second place 'as good as a win' for McLaren

Piastri says Japan second place 'as good as a win' for McLaren

-

Nepal's former energy minister arrested in graft probe

-

IOC reinstating gender tests 'a disrespect for women' - Semenya

IOC reinstating gender tests 'a disrespect for women' - Semenya

-

Youngest F1 title leader Antonelli to keep 'raising bar' after Japan win

-

High hopes at China's gateway to North Korea as trains resume

High hopes at China's gateway to North Korea as trains resume

-

Antonelli wins in Japan to become youngest F1 championship leader

-

Mercedes' Antonelli wins Japanese Grand Prix to take lead

Mercedes' Antonelli wins Japanese Grand Prix to take lead

-

Germany's WWII munitions a toxic legacy on Baltic Sea floor

-

Iran claims aluminium plant attacks in Gulf as Houthis join war

Iran claims aluminium plant attacks in Gulf as Houthis join war

-

North Korea's Kim oversees test of high-thrust engine: state media

-

Five Apple anecdotes as iPhone maker marks 50 years

Five Apple anecdotes as iPhone maker marks 50 years

-

'Excited' Buttler rejuvenated for IPL after horror T20 World Cup

-

Ship insurers juggle war risks for perilous Gulf route

Ship insurers juggle war risks for perilous Gulf route

-

Helplines buzz with alerts from seafarers trapped in war

-

Let's get physical: Singapore's seniors turn to parkour

Let's get physical: Singapore's seniors turn to parkour

-

Indian tile makers feel heat of Mideast war energy crunch

-

At 50, Apple confronts its next big challenge: AI

At 50, Apple confronts its next big challenge: AI

-

Houthis missile attacks on Israel widen Middle East war

-

Massive protests against Trump across US on 'No Kings' day

Massive protests against Trump across US on 'No Kings' day

-

Struggling Force lament missed opportunities after Chiefs defeat

-

Lakers guard Doncic gets one-game ban for accumulated technicals

Lakers guard Doncic gets one-game ban for accumulated technicals

-

Houthis claim missile attacks on Israel, entering Middle East war

-

NBA Spurs stretch win streak to eight in rout of Bucks

NBA Spurs stretch win streak to eight in rout of Bucks

-

US lose 5-2 to Belgium in rude awakening for World Cup hosts

-

Sabalenka sinks Gauff to win second straight Miami Open title

Sabalenka sinks Gauff to win second straight Miami Open title

-

Lebanon kids struggle to keep up studies as war slams school doors shut

-

Cherry blossoms, kite-flying and 'No Kings' converge on Washington

Cherry blossoms, kite-flying and 'No Kings' converge on Washington

-

Britain's Kerr to target El Guerrouj's mile world record

-

Sailboats carrying aid reach Cuba after going missing: AFP journalist

Sailboats carrying aid reach Cuba after going missing: AFP journalist

-

Pakistan to host Saudi, Turkey, Egypt for talks on Mideast war

-

Formidable Sinner faces Lehecka for second Miami Open title

Formidable Sinner faces Lehecka for second Miami Open title

-

Tuchel plays down Maguire's World Cup hopes

-

'Risky moment': Ukraine treads tightrope with Gulf arms deals

'Risky moment': Ukraine treads tightrope with Gulf arms deals

-

Japan strike late to win Scotland friendly

-

India great Ashwin joining San Francisco T20 franchise

India great Ashwin joining San Francisco T20 franchise

-

Israel hits Iran naval research site, fresh blasts rattle Tehran

Inbred, gibberish or just MAD? Warnings rise about AI models

When academic Jathan Sadowski reached for an analogy last year to describe how AI programs decay, he landed on the term "Habsburg AI".

The Habsburgs were one of Europe's most powerful royal houses, but entire sections of their family line collapsed after centuries of inbreeding.

Recent studies have shown how AI programs underpinning products like ChatGPT go through a similar collapse when they are repeatedly fed their own data.

"I think the term Habsburg AI has aged very well," Sadowski told AFP, saying his coinage had "only become more relevant for how we think about AI systems".

The ultimate concern is that AI-generated content could take over the web, which could in turn render chatbots and image generators useless and throw a trillion-dollar industry into a tailspin.

But other experts argue that the problem is overstated, or can be fixed.

And many companies are enthusiastic about using what they call synthetic data to train AI programs. This artificially generated data is used to augment or replace real-world data. It is cheaper than human-created content but more predictable.

"The open question for researchers and companies building AI systems is: how much synthetic data is too much," said Sadowski, lecturer in emerging technologies at Australia's Monash University.

- 'Mad cow disease' -

Training the AI programs behind such products, known in the industry as large language models (LLMs), involves scraping vast quantities of text or images from the internet.

This information is broken into trillions of tiny machine-readable chunks, known as tokens.

When asked a question, a program like ChatGPT selects and assembles tokens in a way that its training data tells it is the most likely sequence to fit with the query.

But even the best AI tools generate falsehoods and nonsense, and critics have long expressed concern about what would happen if a model was fed on its own outputs.

In late July, a paper in the journal Nature titled "AI models collapse when trained on recursively generated data" proved a lightning rod for discussion.

The authors described how models quickly discarded rarer elements in their original dataset and, as Nature reported, outputs degenerated into "gibberish".
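The loss of rarer elements can be illustrated with a toy simulation. The sketch below is not the method used in the Nature paper: it assumes a made-up three-token "model" that is simply a table of token frequencies, and it "retrains" each generation by counting tokens in a sample drawn from the previous generation. The names `retrain` and `simulate`, the token labels and the starting probabilities are all hypothetical.

```python
import random
from collections import Counter


def retrain(dist, sample_size, rng):
    """Draw a sample from the current model and re-estimate
    token frequencies from that sample alone."""
    tokens = list(dist)
    weights = [dist[t] for t in tokens]
    sample = rng.choices(tokens, weights=weights, k=sample_size)
    counts = Counter(sample)
    # A token that was never sampled gets no entry, so it can never return.
    return {t: c / sample_size for t, c in counts.items()}


def simulate(generations=30, sample_size=200, seed=0):
    """Train each generation only on the previous generation's output."""
    rng = random.Random(seed)
    # Hypothetical starting distribution standing in for human-made data.
    dist = {"common": 0.90, "uncommon": 0.09, "rare": 0.01}
    support_sizes = [len(dist)]
    for _ in range(generations):
        dist = retrain(dist, sample_size, rng)
        support_sizes.append(len(dist))
    return dist, support_sizes


if __name__ == "__main__":
    final, sizes = simulate()
    print("distinct tokens per generation:", sizes)
    print("final distribution:", final)
```

In this toy setup the number of distinct tokens can only stay the same or fall from one generation to the next, because a token that fails to appear in one sample is gone for good. Real models are far more complex, so this shows only the general mechanism.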

A week later, researchers from Rice and Stanford universities published a paper titled "Self-consuming generative models go MAD" that reached a similar conclusion.

They tested image-generating AI programs and showed that outputs became more generic and riddled with undesirable elements as they added AI-generated data to the underlying model.

They labelled model collapse "Model Autophagy Disorder" (MAD) and compared it to mad cow disease, a fatal illness caused by feeding the remnants of dead cows to other cows.

- 'Doomsday scenario' -

These researchers worry that AI-generated text, images and video are clearing the web of usable human-made data.

"One doomsday scenario is that if left uncontrolled for many generations, MAD could poison the data quality and diversity of the entire internet," one of the Rice University authors, Richard Baraniuk, said in a statement.

However, industry figures are unfazed.

Anthropic and Hugging Face, two leaders in the field who pride themselves on taking an ethical approach to the technology, both told AFP they used AI-generated data to fine-tune or filter their datasets.

Anton Lozhkov, machine learning engineer at Hugging Face, said the Nature paper gave an interesting theoretical perspective but its disaster scenario was not realistic.

"Training on multiple rounds of synthetic data is simply not done in reality," he said.

However, he said researchers were just as frustrated as everyone else with the state of the internet.

"A large part of the internet is trash," he said, adding that Hugging Face already made huge efforts to clean data -- sometimes jettisoning as much as 90 percent.

He hoped that web users would help clear up the internet by simply not engaging with generated content.

"I strongly believe that humans will see the effects and catch generated data way before models will," he said.

V.Fontes--PC